Nvidia (NASDAQ: NVDA) closed at $180.25, down 1.59%, but the dip does little to change the larger story around the company. Nvidia has become the backbone of the global artificial intelligence boom, with its GPUs powering everything from large language models to enterprise AI services. While investors often focus on Nvidia’s chips themselves, the broader AI infrastructure boom shows that building the world’s data centers requires far more than GPUs alone.

The company’s growth over the past few years has been staggering. Nvidia’s revenue surged from $26.9 billion in 2022 to $215.9 billion in 2025, and analysts expect it could reach $358.7 billion in 2026 as hyperscalers accelerate spending on AI infrastructure. Since the launch of ChatGPT in November 2022, Nvidia’s stock has risen nearly 990%, cementing its role as the most important semiconductor company in the AI revolution.
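
The growth figures above can be checked with simple arithmetic. The short Python sketch below uses only the numbers quoted in this article. The helper names (`growth_multiple`, `cagr`) are illustrative, and treating 2022 to 2025 as three annual periods is an assumption about how the reporting years line up.

```python
def growth_multiple(start: float, end: float) -> float:
    """How many times larger `end` is than `start`."""
    return end / start


def cagr(start: float, end: float, periods: int) -> float:
    """Compound annual growth rate over `periods` years, as a fraction."""
    return (end / start) ** (1 / periods) - 1


# Revenue figures quoted in the article, in billions of dollars.
REVENUE_2022 = 26.9
REVENUE_2025 = 215.9
REVENUE_2026_ESTIMATE = 358.7

multiple = growth_multiple(REVENUE_2022, REVENUE_2025)
annual_rate = cagr(REVENUE_2022, REVENUE_2025, periods=3)  # assumes three annual steps
projected_growth = growth_multiple(REVENUE_2025, REVENUE_2026_ESTIMATE) - 1

# A 990% gain means the price is 1 + 9.90 times its starting level.
stock_multiple = 1 + 990 / 100

print(f"Revenue multiple 2022 to 2025: {multiple:.1f}x")
print(f"Implied annual growth rate: {annual_rate:.0%}")
print(f"Implied growth to 2026 estimate: {projected_growth:.0%}")
print(f"Stock price multiple since November 2022: {stock_multiple:.1f}x")
```

On these figures, revenue grew roughly eightfold in three years, which works out to about a doubling each year, and the 2026 estimate implies further growth of roughly two thirds.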

Nvidia at the center of the global AI spending boom

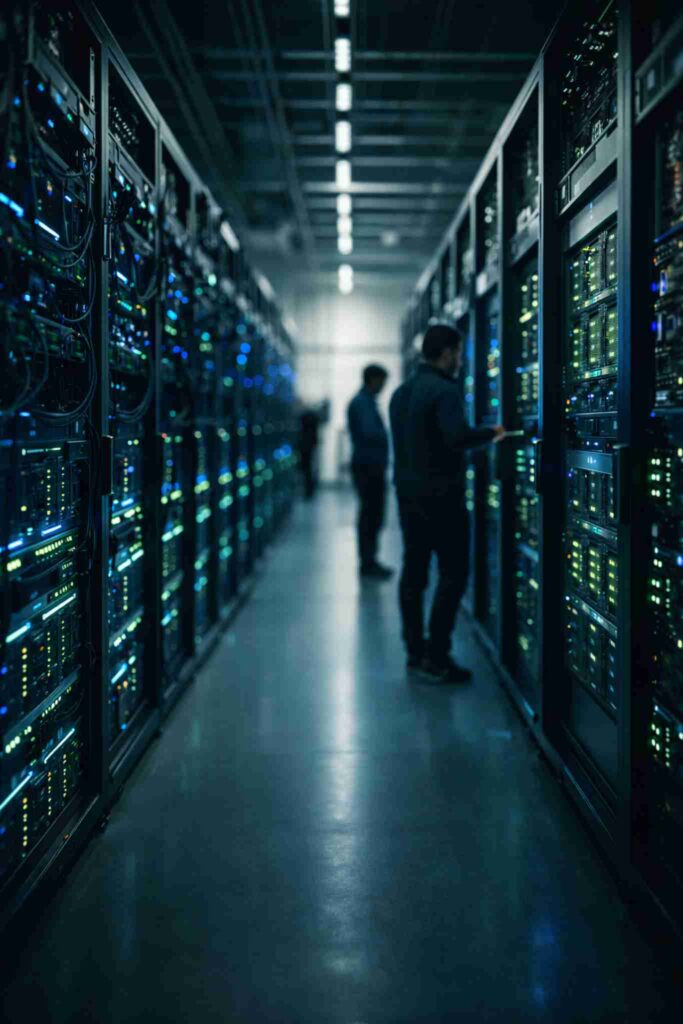

Today, the largest technology companies in the world are racing to build AI-powered infrastructure, and nearly all of them rely heavily on Nvidia hardware. Companies such as Microsoft, Amazon, Google, and Meta are spending billions of dollars on new data centers designed specifically for AI workloads.

Nvidia’s GPUs serve as the computational engines inside those facilities. These processors handle the massive workloads required to train and run AI models, enabling everything from advanced search engines to generative AI tools used by enterprises and consumers worldwide.

Despite the critical role its chips play, however, Nvidia does not actually build the data centers where those processors operate.

The companies that turn Nvidia chips into AI infrastructure

While Nvidia develops the GPUs and core technologies used in AI computing, the servers and large-scale systems that power AI models are typically built by companies such as Dell and Hewlett Packard Enterprise (HPE), and by large manufacturing partners like Foxconn.

These companies assemble complete server systems that include Nvidia GPUs, networking hardware, memory, and storage. Those servers are then installed in racks that fill entire data centers.

Chris Davidson, vice president of high-performance computing and AI customer solutions at HPE, explained that Nvidia supplies critical building blocks like GPUs, data processing units, networking cards, and the software required to operate them. However, those components must still be integrated into complete solutions before they can power AI workloads.

According to Davidson, without system integrators assembling the infrastructure and tailoring it to customer requirements, the hardware alone would simply be disconnected components.

The long journey from chip to data center

The process of building AI infrastructure begins long before a server is ever installed. Nvidia GPUs are manufactured by Taiwan Semiconductor Manufacturing Company (TSMC), one of the world’s largest semiconductor foundries.

After production, those chips are sent to manufacturers that assemble them into server blades and full AI server systems. The completed servers are then delivered to data centers where they are installed into racks and connected to networking systems.

From there, the infrastructure becomes the backbone for AI services used by companies such as OpenAI, Google, Microsoft, and Meta.

This complex supply chain highlights how the AI boom extends far beyond a single company. Nvidia provides the core technology, but an entire ecosystem of partners helps transform that technology into operational AI infrastructure.

Planning data centers starts long before installation

Another critical detail often overlooked is the amount of planning required before AI servers can be deployed.

Companies building AI data centers frequently begin working with infrastructure providers months or even years before systems arrive. Engineers must analyze the location, available electrical power, cooling capacity, and network architecture required to support high-performance computing environments.

Davidson noted that companies are often involved in planning stages even before a new data center facility is fully constructed.

This early collaboration ensures that facilities can handle the enormous power and cooling requirements of AI hardware. High-performance GPUs generate significant heat and require specialized infrastructure to operate efficiently.

Teams of engineers design every data center

Arthur Lewis, president of infrastructure at Dell, explained that building AI infrastructure requires teams of experts working together. These teams include data center architects, network engineers, thermal specialists, compute architects, and storage experts.

Each customer’s requirements are different, meaning no two AI data centers are exactly the same.

Different AI workloads demand different system configurations. Training large models may require massive clusters of GPUs working together, while inference workloads might prioritize efficiency and lower latency.

Because of these variations, infrastructure providers work closely with customers to design systems optimized for specific software environments and business needs.

Deploying AI infrastructure faster than ever

Speed has become a critical factor in the AI arms race. The longer expensive GPU systems remain inactive, the more money companies lose on their investments.

Dell has developed deployment strategies designed to bring AI infrastructure online quickly. In some cases, entire server racks arrive preconfigured and ready for installation.

According to Lewis, trucks can deliver racks directly to a data center where they are unloaded with forklifts, placed into position, connected to power and networking systems, and brought online within 24 hours.

In one major deployment, Dell worked with a customer to install 100,000 GPUs in just six weeks, an extraordinary pace compared to traditional data center rollouts.
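
To put that pace in perspective, the figures quoted above translate into an average installation rate. This is a back-of-the-envelope Python sketch: it assumes the six weeks amounted to 42 calendar days of evenly paced, round-the-clock work, which the article does not state, and the variable names are illustrative.

```python
# Figures quoted in the article.
GPUS_INSTALLED = 100_000
WEEKS = 6

# Assumption: work ran evenly across all 7 days of each week, 24 hours a day.
days = WEEKS * 7
gpus_per_day = GPUS_INSTALLED / days
gpus_per_hour = gpus_per_day / 24

print(f"Days elapsed: {days}")
print(f"Average GPUs installed per day: {gpus_per_day:,.0f}")
print(f"Average GPUs installed per hour: {gpus_per_hour:,.0f}")
```

Under those assumptions, the deployment averaged roughly 2,400 GPUs per day, or about 100 per hour.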

Nvidia’s biggest advantage may be software

Beyond its hardware leadership, Nvidia also holds a powerful advantage through its software ecosystem.

The company’s CUDA platform allows developers to fully utilize the processing power of Nvidia GPUs. Over the years, CUDA has become one of the most widely used platforms for accelerated computing and AI development.

Justin Boitano, Nvidia’s vice president of enterprise platforms, noted that a large portion of Nvidia’s workforce consists of software engineers dedicated to improving developer tools, documentation, and frameworks.

This focus on software has helped Nvidia build a developer ecosystem that encourages companies to continue building AI applications on its platform.

The next phase of the AI infrastructure race

Despite the stock’s 1.59% decline to $180.25, Nvidia remains one of the biggest beneficiaries of the AI revolution. The company sits at the center of an expanding ecosystem that includes chip manufacturers, server builders, hyperscale cloud providers, and enterprise developers.

As the AI industry continues scaling, the demand for powerful computing infrastructure is expected to grow rapidly.

Nvidia is expected to reveal additional hardware and software developments at its upcoming GTC conference in San Jose, where the company typically unveils its latest AI computing technologies.

If AI adoption continues accelerating across industries, the infrastructure buildout powering that transformation could keep Nvidia and its ecosystem of partners at the center of the technology industry for years to come.
